Auditory Development Lab

COVID-19 UPDATE - MAY 2022

We are pleased to announce that we are able to resume some of our research studies!

We are strictly following the cleaning and PPE safety protocols set in place by McMaster University and the Government of Ontario.

We appreciate the continued support and interest in our research from so many participants!

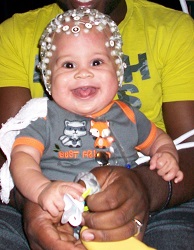

In the Auditory Development Lab, we study the perception of sound in infants, children, and adults, as well as the acquisition of music and language. We are interested in what infants perceive when they listen to speech and music, how this changes as they grow, and what influences how sound perception develops. We use both behavioural and brain imaging (EEG, MEG) techniques to answer such questions as:

- Why do caregivers talk and sing to infants who don't understand the words?

- Can infants recognize tunes? If so, what aspects of the musical structure do they encode?

- How do infants perceive pitch, pitch patterns, and melodies?

- How is rhythm perceived?

- What is the multisensory relation between music and movement?

- How does musical training affect brain development?

Participate in one of our studies

Join our Developmental Research Participant Database!

Complete a 2-minute survey to participate in our research studies.

Click here

What to expect if you are a child participating in one of our studies